What I’ve Been Reading: Vol. 1

Other people's takes on how science is changing.

I enjoy when Substackers share what they’ve been reading (Brian Potter and Lauren Gilbert publish roundups I like); it’s a good way to find pieces I might have missed and gives me a glimpse at what other authors are thinking about. Now I’m paying it forward.

Here are four articles and one new website that have influenced my thinking recently. These pieces span a few questions I’ve been considering: how science is globalizing, how it’s shifting with new technology, and how we can better measure its benefits.

1. The Pop-Up Journal Initiative

The Alfred P. Sloan Foundation and Coefficient Giving launched this initiative to “curate and synthesize evidence around specific, policy-relevant questions” with the goal of delivering actionable evidence to decision-makers. This is intended to be a series of journals, and the topic of their first — what is the social return on R&D? — is neglected. Our knowledge about the returns to R&D has grown in recent decades, but we still need more granular evidence of what is driving returns, and in which sectors and areas of the world.

I’m intrigued by the project’s format. Like focused research organizations (FROs) and Renaissance Philanthropy’s time-bound funds, this pop-up journal will dedicate bounded time and funding to producing policy-relevant evidence. By providing new research funds, Sloan and Coefficient Giving will grow the community of researchers studying the returns to R&D (and those researchers will likely continue that work after the journal, uh, pops down).

The journal is accepting applications for research grants through April 30, 2026 (I realize the deadline is very soon — but perhaps you have a good proposal lying around or can work quickly). They’ll provide $250,000 for studies that will “provide key empirical insight into the social and economic returns to R&D investment.” Larger requests may be considered for uniquely ambitious projects. Apply here.

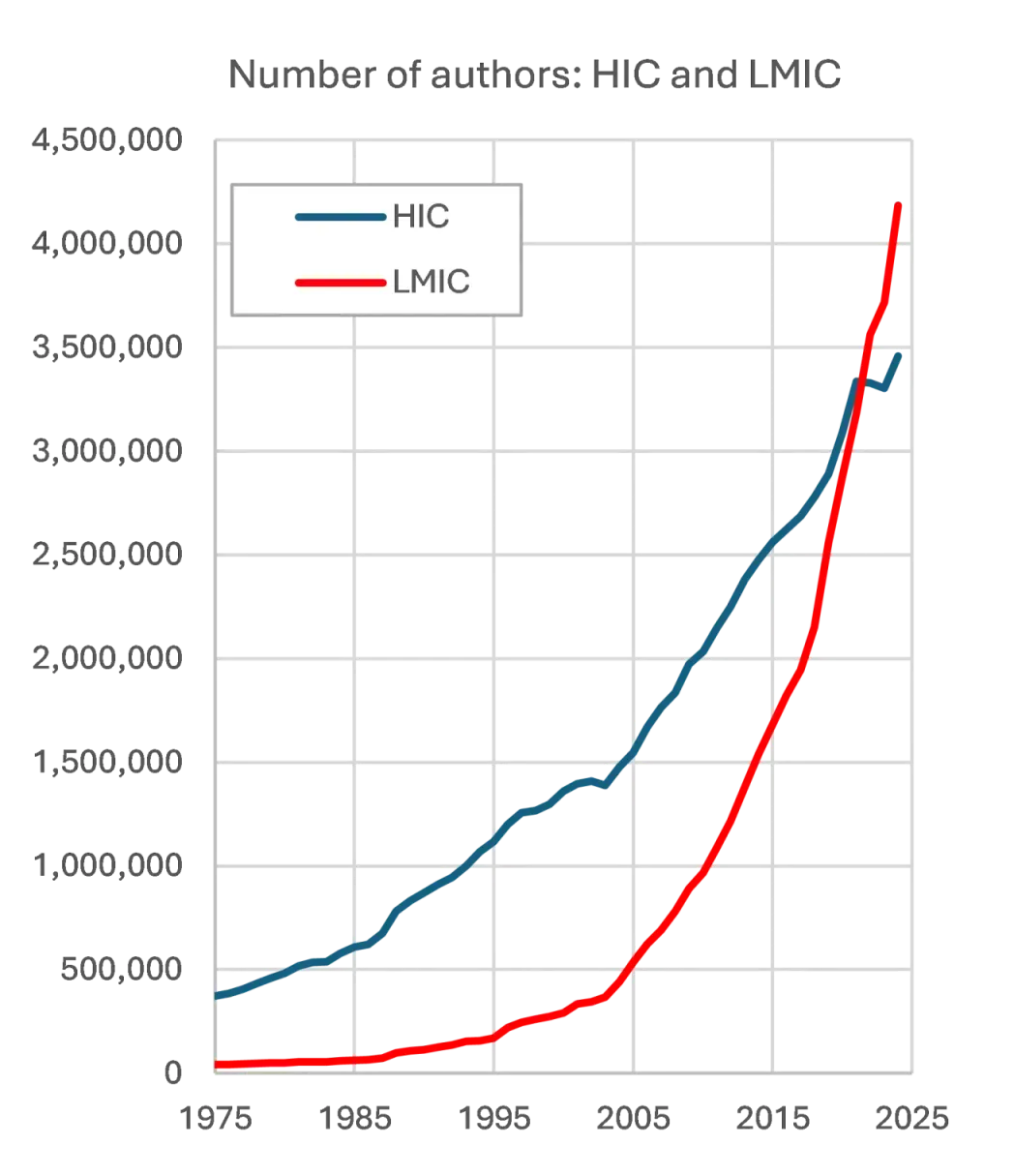

2. “Are we ready for a multipolar world of research?” (Carlos H Brito Cruz in the London School of Economics Impact blog)

Brito Cruz points out a blind spot in the debate over whether the US or China is the most scientifically advanced country: meanwhile, low- and middle-income countries (LMICs) have been building their scientific capabilities. For the first time ever, the majority of authors in the SCOPUS Abstract and Citation database are from LMICs. In my view, this piece is a story about (1) China, of course (still technically middle income), but also (2) developing countries investing in science, and (3) technology, from Zoom calls with co-authors to Google Translate, making it easier than ever for LMIC researchers to publish.

The piece ends, as more articles should, with a Bob Dylan quote and an exhortation to work harder:

“For the first time in history, there are more active researchers outside the richer countries…The impact this will have on the advancement of knowledge is only beginning to be grasped. Research institutions across the globe might do well to heed the words Bob Dylan wrote of the epochal changes of the 1960s, ‘you better start swimming, or you’ll sink like a stone.’”

3. “Does MAGA Actually Want American Science to Win?” (Ari Schulman in the New Atlantis)

What do right-wing science reformers actually want? What do they actually think would make American science stronger? Schulman argues that, while skeptics of the scientific status quo describe a destructive higher-education reckoning as necessary, they don’t have a vision for what a better science would look like. Schulman writes:

“When asked how slashing support for science by about half, as the administration is proposing to do across its research funding agencies, will make American science stronger, the answer is always about how serious science’s ideological mistakes during Covid and the Great Awokening were, and how deserved and desirable is the correction — an obviously true claim that simply has nothing to do with the grave question being asked.”

4. “There is no randomising a technological revolution” (Oliver Hanney in Oliver’s Substack)

This piece has some echoes of Tim Hwang’s Macroscience piece about the difficulty of measuring what works in science during a rapid technological transformation, but shifts the focus to international economic development. International development has been a testbed for randomized controlled trials (RCTs) and other innovations in economics (some of which have been an inspiration for metascience), and the field has leaned on RCTs to validate that what we think is working is actually working. But with AI increasing the speed of social and economic change in LMICs, RCTs on — for example — job training may end up measuring how things used to be rather than how things are.

I agree with Hanney that, in a time of rapid change, economists should neither limit themselves to analyzing things that can be readily measured, nor keep quiet when they have evidence-informed views — even if they aren’t 100% sure of them.1 As Hanney notes, an economist’s intuition about what will happen to developing country labor markets or redistribution will likely be quite good relative to someone who doesn’t have any background.

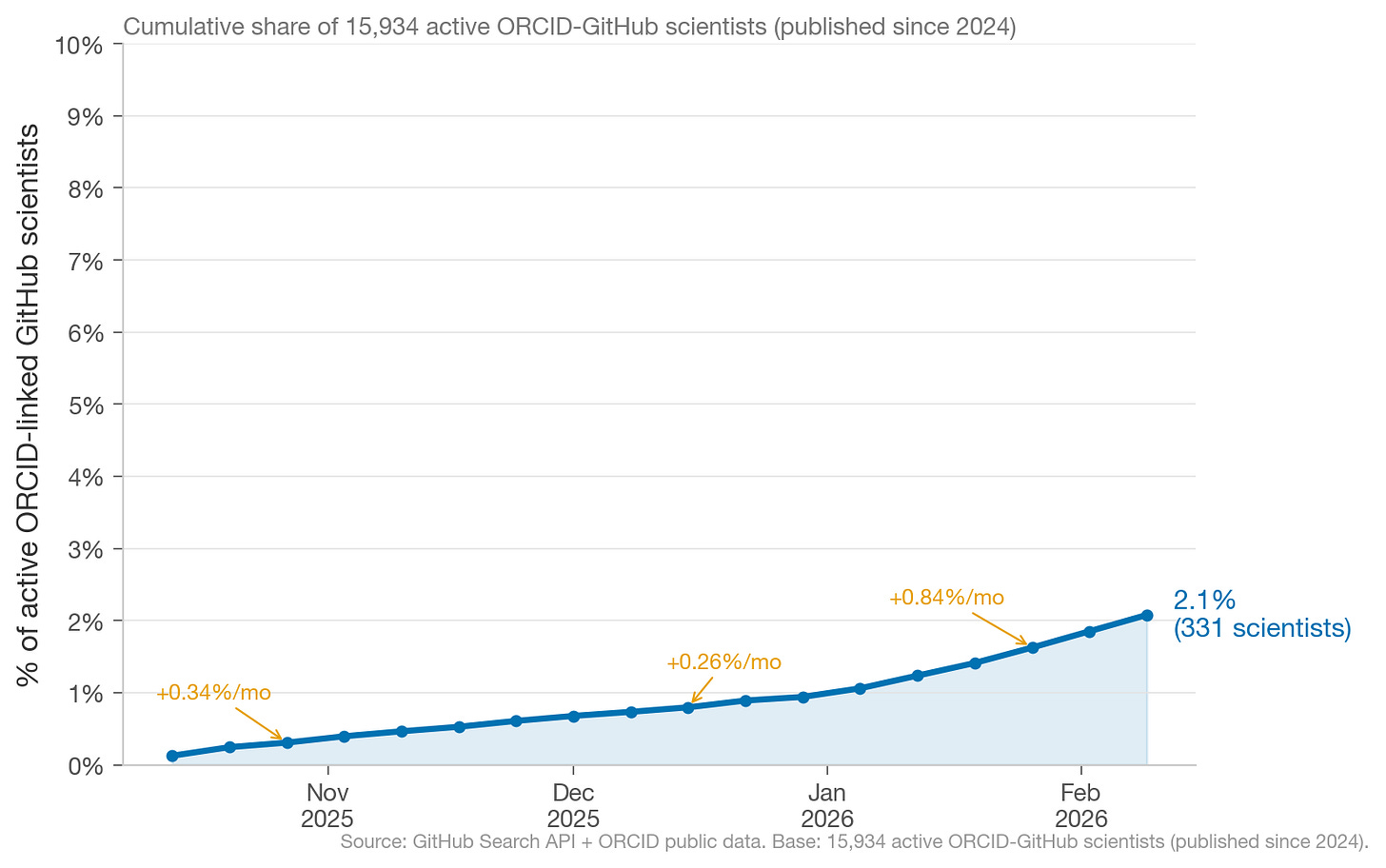

5. “How do scientists use Claude Code?” (Charles Yang in The Republic of Science)

We already know about common AI use cases (automating repetitive tasks, simplifying complex statistical projects, visualizing data, etc.). However, as Yang notes, we don’t actually know much about who is using advanced AI in academia, or how. But we should: AI has the potential to accelerate (and disrupt) research, and as metascientists, we need to understand the factors that influence scientific productivity.

Yang uses a clever approach of analyzing academics’ ORCID-linked GitHub profiles to study what kinds of scientists (seniority, location, etc.) are using Claude Code.

Around 2% of scientists with ORCID-linked GitHub profiles are using Claude Code. This is probably an undercount: there are likely plenty of scientists who use Claude Code but haven’t connected it to GitHub, or whose GitHub profile isn’t linked to ORCID.
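To make the undercount point concrete, here is a rough sketch of the arithmetic in Python. It assumes that only some fraction of actual Claude Code users are visible in the ORCID-linked GitHub data, and scales the observed share up accordingly. Only the roughly 2% figure comes from Yang's piece; the function name `adjusted_share` and the detection rates are hypothetical, chosen purely for illustration.

```python
def adjusted_share(observed_share: float, detection_rate: float) -> float:
    """Scale an observed adoption share by an assumed detection rate.

    detection_rate is the (hypothetical) fraction of true users whose
    usage is actually visible in the data. The result is capped at 1.0.
    """
    if not 0.0 <= observed_share <= 1.0:
        raise ValueError("observed_share must be between 0 and 1")
    if not 0.0 < detection_rate <= 1.0:
        raise ValueError("detection_rate must be in (0, 1]")
    return min(1.0, observed_share / detection_rate)


if __name__ == "__main__":
    observed = 0.02  # roughly 2%, the share reported in Yang's piece
    # Hypothetical detection rates: nobody knows the real one.
    for rate in (1.0, 0.5, 0.25):
        implied = adjusted_share(observed, rate)
        print(f"detection rate {rate:.2f} -> implied share {implied:.1%}")
```

This adjustment only applies within the population of scientists who have ORCID-linked GitHub profiles. It says nothing about scientists outside that group, which is a separate selection problem.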

I think we can go beyond Yang’s use of ORCID to see who is using Claude Code, and reach a larger, more representative sample of scientists. This is an area where good, old-fashioned survey research could be useful (the UK is doing something similar), to ask, for example, which AI tools US academics have heard of, which they use, and for what.
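As a back-of-the-envelope sketch of what such a survey would require, the snippet below uses the standard sample-size formula for estimating a proportion, n = z² · p(1 − p) / e². It assumes simple random sampling with full response and no finite-population correction, none of which a real survey of academics would enjoy. The function name and the example inputs are my own assumptions; only the roughly 2% starting guess is borrowed from Yang's estimate.

```python
import math


def sample_size_for_proportion(p: float, margin: float, z: float = 1.96) -> int:
    """Respondents needed to estimate a proportion p within +/- margin.

    Uses n = z^2 * p * (1 - p) / margin^2, rounded up. z = 1.96
    corresponds to an approximate 95% confidence level.
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    if margin <= 0.0:
        raise ValueError("margin must be positive")
    return math.ceil(z ** 2 * p * (1.0 - p) / margin ** 2)


if __name__ == "__main__":
    # Hypothetical target: pin down a ~2% adoption rate to within
    # one percentage point.
    print(sample_size_for_proportion(p=0.02, margin=0.01))
```

Under these idealized assumptions, the answer is in the hundreds of respondents rather than the tens of thousands. Nonresponse and any breakdowns by field or seniority would push the required number up.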

Per the streetlight effect, we shouldn’t look for our keys under the street lamp just because that’s where there’s more light.